Mid-C20th Yiddish HTR

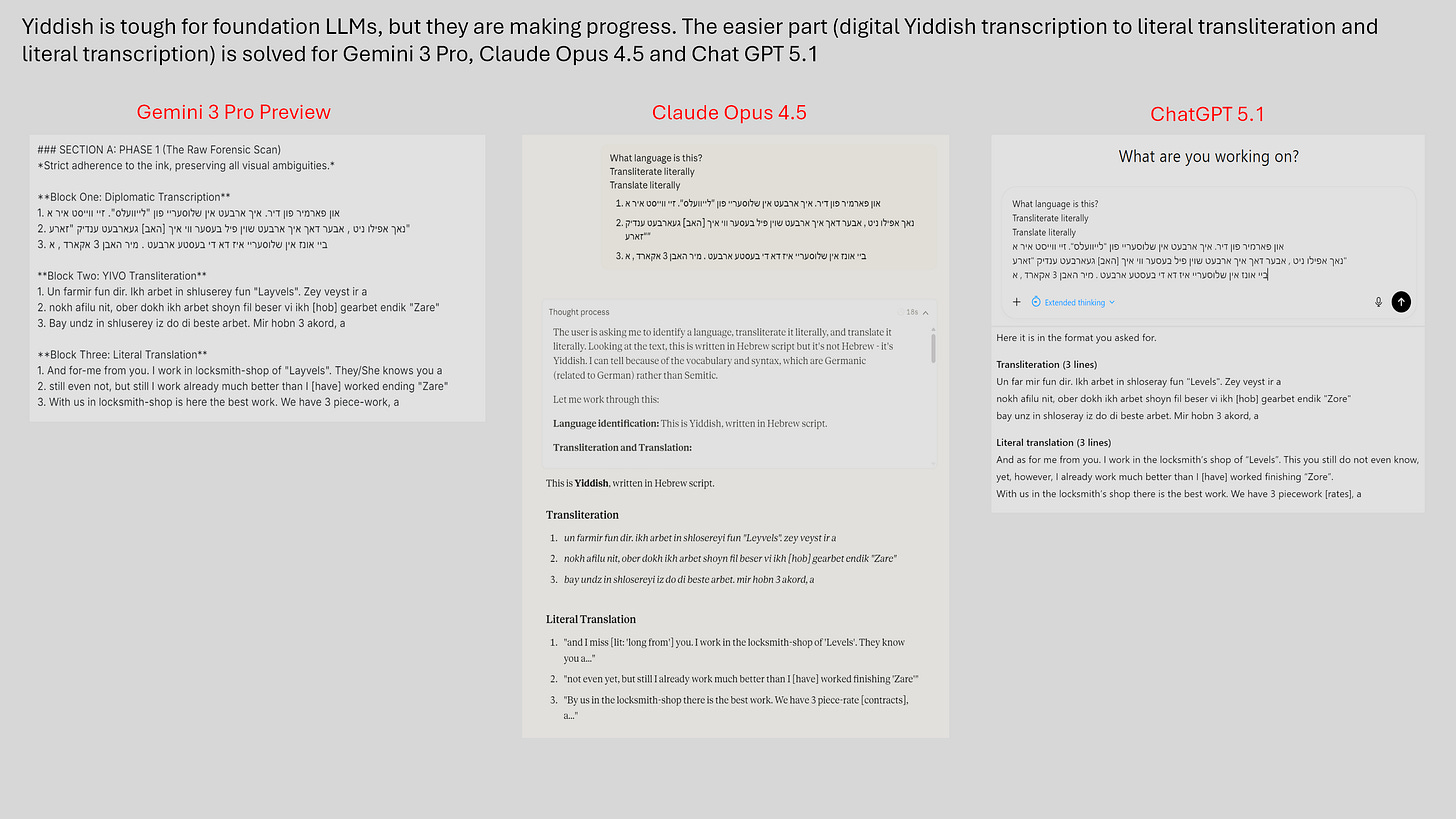

The potential for LLM-based transcription is clear, as amateurs and professionals experiment with Gemini 3 Pro's unique visually grounded capabilities. So how does Gemini perform in an edge case?

Professor Mark Humphries has demonstrated Gemini 3 Pro’s extraordinary capabilities in English language transcription, which I have confirmed. 1 2 We both expect these capabilities to increase with further development of Gemini and other LLM models, both proprietary and open. Historical HTR is currently not a focus for Google or other major LLM builders, but it should be. It would help historians and the public open up the archives in a deeply empowering and highly scalable way.

The key reason why Gemini 3 shows such advanced HTR capabilities lies in the attention Google had devoted in the model’s construction to cross-language training in over 100 languages, and the excellent visual grounding capabilities of the multimodal model design, which has been built for a variety of vision use cases.

It is unclear whether Gemini 3 has been deliberately trained on historic handwriting and ground truth, though there is some evidence of some training awareness of MarineLives English High Court of Admiralty transcribed depositions, probably accessed through the Common Crawl. The lastest November 2025 Common Crawl has ca. 150,000 words of HCA 13/ legal deposition ground truth of notarial secretary hand from 1648 to 1669 sourced from MarineLives (and made available by us under CCBY3).

This article

This article dives deep into a difficult edge case, that of transcribing handwritten mid-C20th Yiddish, and argues that Gemini 3 Pro has the innate ability to transcribe Yiddish, though not yet with human level accuracy.

The article explores the challenges of Yiddish handwriting and shares a working solution using Gemini 3 Pro Preview.

Finally, the article proposes a novel approach to LLM-based transcription using agent.md and skill.md approaches, analogous to the methodology popularised by Anthropic, and now being adopted by OpenAI and Google. This may be of particular use for medium and low resource languages, where there is limited training data in the underlying LLMs.

The Edge Case: Mid-C20th Yiddish

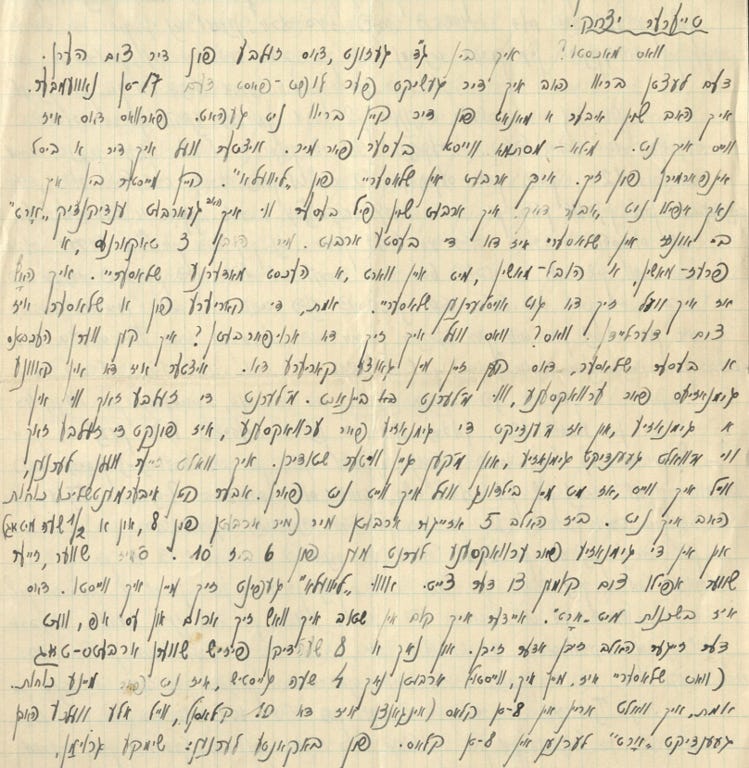

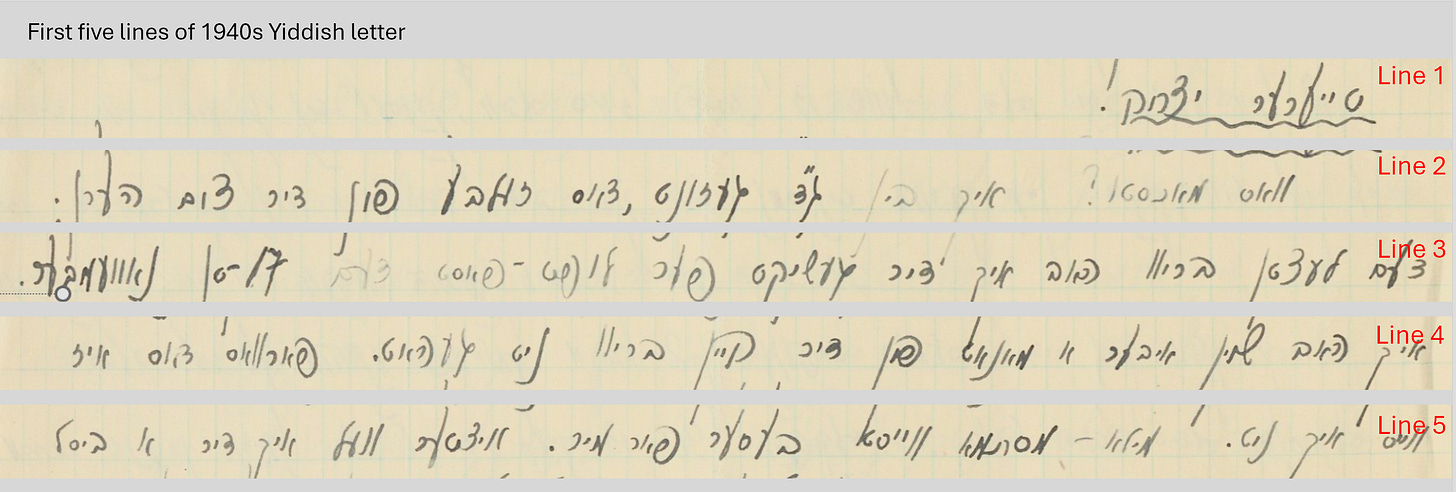

What do you make of this letter? It was kindly provided to me by Gavin Beinart-Smollan, public historian and scholar of Yiddish, who is a doctoral candidate at NYU. Gavin also provided a line by line gold standard Yiddish transliteration and English translation. Can you read it? I can’t, although I can read Yiddish in transliteration. In this article I approach Yiddish as a computational process control problem, working in collaboration with Gavin Beinart-Smollan, as language expert and critic of my results and methods. A good moment to thank Gavin (who has not yet read this article, and will no doubt be full of comments and corrections), to thank Mark Humphries for his good steer “keep your prompting simple”, and to thank Tim Hitchcock for his continued encouragement to me to publish my ideas and to get them out there for people to react to.

Immediate challenges

What are the immediate challenges presented by this letter? Well for a start the script is read Right to Left and is written with Hebrew characters, which represent not Hebrew but the germanic-slavic language of Yiddish, with Hebrew and aramaic influences, and with many idiosyncracies. Unlike Hebrew, which is largely consonantal, Yiddish uses Hebrew letters (like aleph, ayin, vav, and yod) primarily as vowels to create a phonetic alphabet. These idiosyncracies include specific use of diacritics and ligatures which differ from Hebrew, and many loan words from other languages.

Google is known to have worked on Yiddish since 2009, but Yiddish spelling was only standardised in the C20th, so any HTR driven transcription will have to cope with considerable regional and temporal difference, and limited historic Yiddish training material. The overall Yiddish training corpus used by Google when training Gemini 3 is likely to have been relatively limited, though the Hebrew training corpus is likely to have been significantly larger.

My approach

I set about exploring the letter as a computational process, rather than a linguistic challenge. Could I find a way, working with Gemini 3 Pro Preview, to get a good line by line transcription. I wasn’t bothered with transliteration or translation, having already proven to myself that all the major LLMs have essentially solved going from digital Yiddish transcription to transliteration to translation.

My challenge was to go from an image of handwritten Yiddish handwriting to a reliable character by character word by word digital Yiddish transcription. I decided to concentrate on the first five lines.

The gold standard

Here is Gavin’s gold standard transcription for the first five lines, and below that for interest (although I did not intend to work with them) the gold standard transliteration and translation

Yiddish transcription

!טייערער יצחק

.וואס מאַכסטו? איך בין ג”ד געזונט, דאס זעלבן פֿון דיר צום הערן

.דעם לעצטן בריוו האב איך דיר געשיקס פֿור לופט-פאסט דעם 17-טן נאוועאבער

איך האב שוין איבער אַ מאנאט פֿון דיר קיין בריוו ניט געהאַט. פֿאַרוואס דאס איז

ווייס איך ניט. מילא- מסתמא ווייסטו בעסער פֿאַר מיר. איצטער וועל איך דיר אַ ביסל

YIVO transliteration

Tayerer Yitskhok!

Vos makhstu? Ikh bin g”d gezunt, dos zelbn fun dir tsum hern.

Dem letstn briv hob ikh dir geshikt fun luft-post dem 17tn November.

Ikh hob shoyn iber a monat fun dir keyn briv nit gehat. Farvos dos iz,

vays ikh nit. Mile—mistame veys tu beser far mir. Itster vel ikh dir a bisl

English translation

Dear Yitzchak!

How are you? I am, thank God, healthy, and hope to hear the same from you.

I sent you my last letter by airmail on the 17th of November.

I haven’t received a letter from you in over a month. Why that is,

I don’t know. Anyway, you probably know better than I do. Now I will

My tools and my strategy

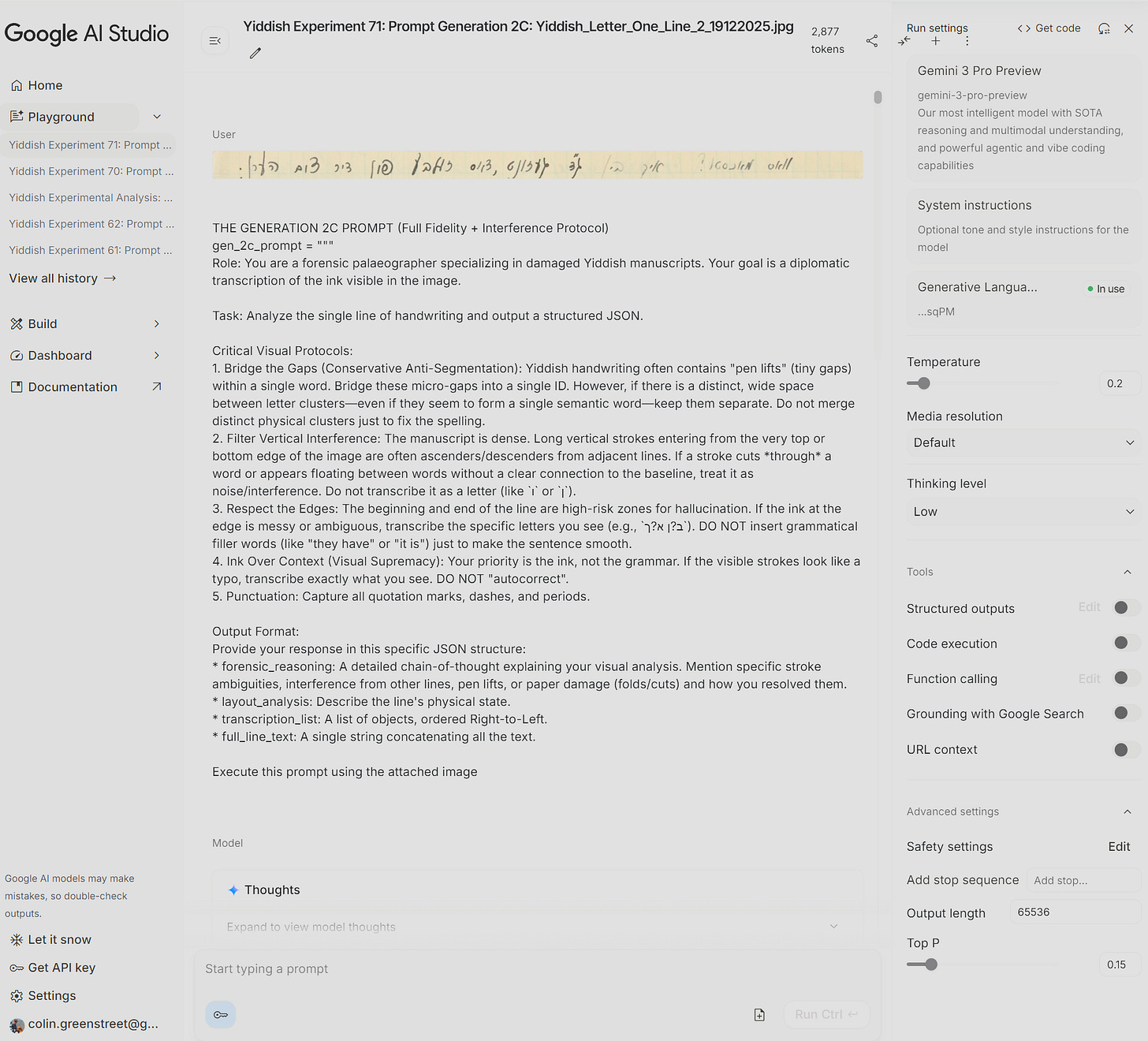

My chosen workbench was Google AI Studio, the same platform Mark Humphries has used for his Gemini 3 benchmarking.

I decided to pursue a simple strategy. Rather than a complex systems instruction, called by a one line user prompt, I homed in on a relatively simple user prompt, with settings designed to focus Gemini entirely on the visual and to minimise any semantic thinking. After experimentation, I settled on a temperature of 0.2, thinking set at low, media resolution on default, and Top P crucially sharply reduced to 0.15. The effect of the Top P reduction (from a more typical 0.95) is to sharply reduce the number of “options” that Gemini would evaluate as it viewed the image of Yiddish handwriting.

I decided to process each of the five lines separately, cutting the image physically into line strips. I cropped them carefully to reduce the amount of above and below line interference from other lines from ascenders and descenders. When working with different Yiddish documents, I have also corrected lines which diverged significantly from the horizontal, but this was not necessary for this particular letter.

My user prompt

My user prompt went through four evolutions (Generation 1, Generation 2A, 2B and 2C).

The current version (Generation 2C) was designed to address five key areas. Firstly, to encourage Gemini not to over-segment words. Secondly, to filter out vertical interference from lines above and below. Thirdly, to teach caution to the model near the right and left edges of the image. Fourthly, to firmly assert visual over semantic supremacy, or ink over context, and to avoid autocorrection. Finally, to capture punctuation, not just characters. Crucially, from the start all my prompt versions required Gemini to produce a detailed forensic reasoning and layout analysis, thus providing information to me as to how the model “saw” the characters and words, and why it made the decisions it did. Moreover, the specified output listed each word from right to left in a line, before the whole line as a single string.

How did I arrive at those five areas? Partly through a priori reasoning (with a different instance of Gemini) about the challenges of reading mid-C20th Yiddish and methods to remediate. Partly, through experimentation, with this specific Yiddish document and with other documents, and post-hoc analysis after each experiment (using the same second instance of Gemini) to understand what had worked and what hadn’t, and then adjusting the prompt.

Here is the full Generation 2C prompt, which is the one I used to generate the data below.

THE GENERATION 2C PROMPT (Full Fidelity + Interference Protocol)

gen_2c_prompt = """

Role: You are a forensic palaeographer specializing in damaged Yiddish manuscripts. Your goal is a diplomatic transcription of the ink visible in the image.

Task: Analyze the single line of handwriting and output a structured JSON.

Critical Visual Protocols:

1. Bridge the Gaps (Conservative Anti-Segmentation): Yiddish handwriting often contains "pen lifts" (tiny gaps) within a single word. Bridge these micro-gaps into a single ID. However, if there is a distinct, wide space between letter clusters—even if they seem to form a single semantic word—keep them separate. Do not merge distinct physical clusters just to fix the spelling.

2. Filter Vertical Interference: The manuscript is dense. Long vertical strokes entering from the very top or bottom edge of the image are often ascenders/descenders from adjacent lines. If a stroke cuts *through* a word or appears floating between words without a clear connection to the baseline, treat it as noise/interference. Do not transcribe it as a letter (like `ו` or `ן`).

3. Respect the Edges: The beginning and end of the line are high-risk zones for hallucination. If the ink at the edge is messy or ambiguous, transcribe the specific letters you see (e.g., `ב?ן א?ך`). DO NOT insert grammatical filler words (like "they have" or "it is") just to make the sentence smooth.

4. Ink Over Context (Visual Supremacy): Your priority is the ink, not the grammar. If the visible strokes look like a typo, transcribe exactly what you see. DO NOT "autocorrect".

5. Punctuation: Capture all quotation marks, dashes, and periods.

Output Format:

Provide your response in this specific JSON structure:

* forensic_reasoning: A detailed chain-of-thought explaining your visual analysis. Mention specific stroke ambiguities, interference from other lines, pen lifts, or paper damage (folds/cuts) and how you resolved them.

* layout_analysis: Describe the line's physical state.

* transcription_list: A list of objects, ordered Right-to-Left.

* full_line_text: A single string concatenating all the text.

Execute this prompt using the attached image

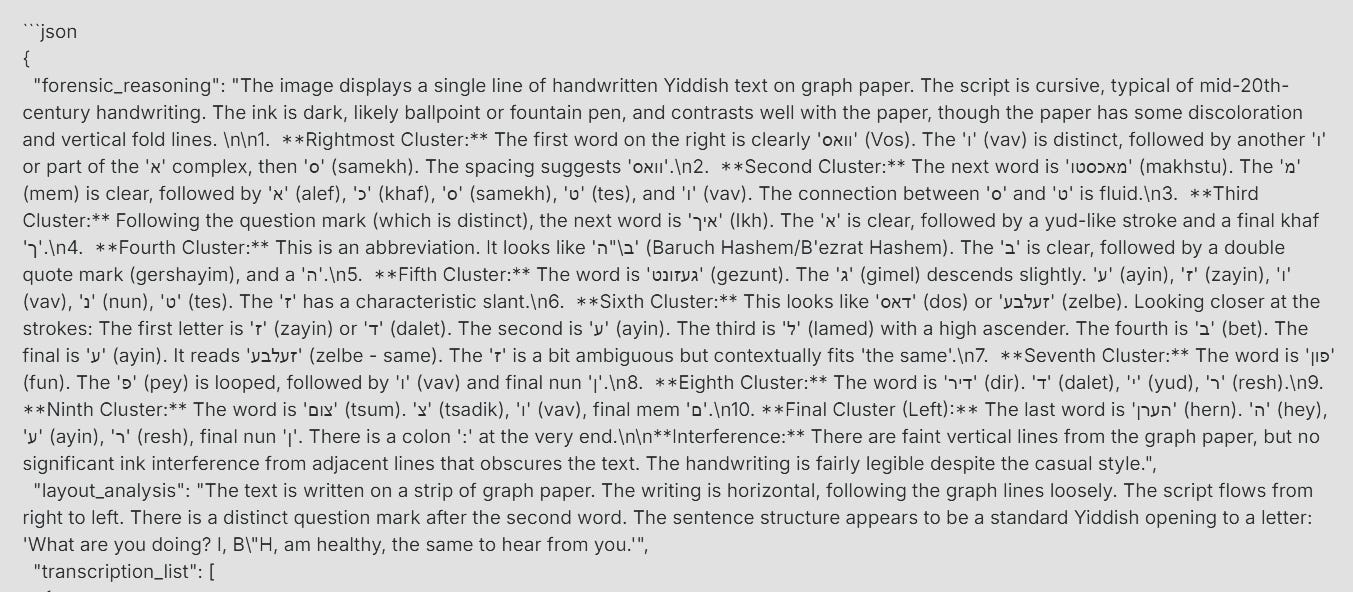

Here is the prompt being executed in Google AI Studio for line 2:

Example of model output for a line

Here is the quality of the output elicited by the prompt. For a linguist it is fascinating, since it provides reasoning behind its character recognition. Not all the reasoning will be correct. Indeed, in terms of understanding and improving an LLM-based HTR process, it is seeing why a model made incorrect character and word choices, misread strokes, missed diacritics, whatever, that is most revealing and helpful.

Transkribus has no ability to reason nor to verbalise its character recognition. That doesn’t mean its recognition is worse. Not at all. But it is far less interesting from a research point of view. I said at the start of my article that I don’t read Yiddish (Hebrew) characters. But, at the end of ten days work with Gemini, I know infinitely more about the structure of Yiddish than I would reading Transkribus output.

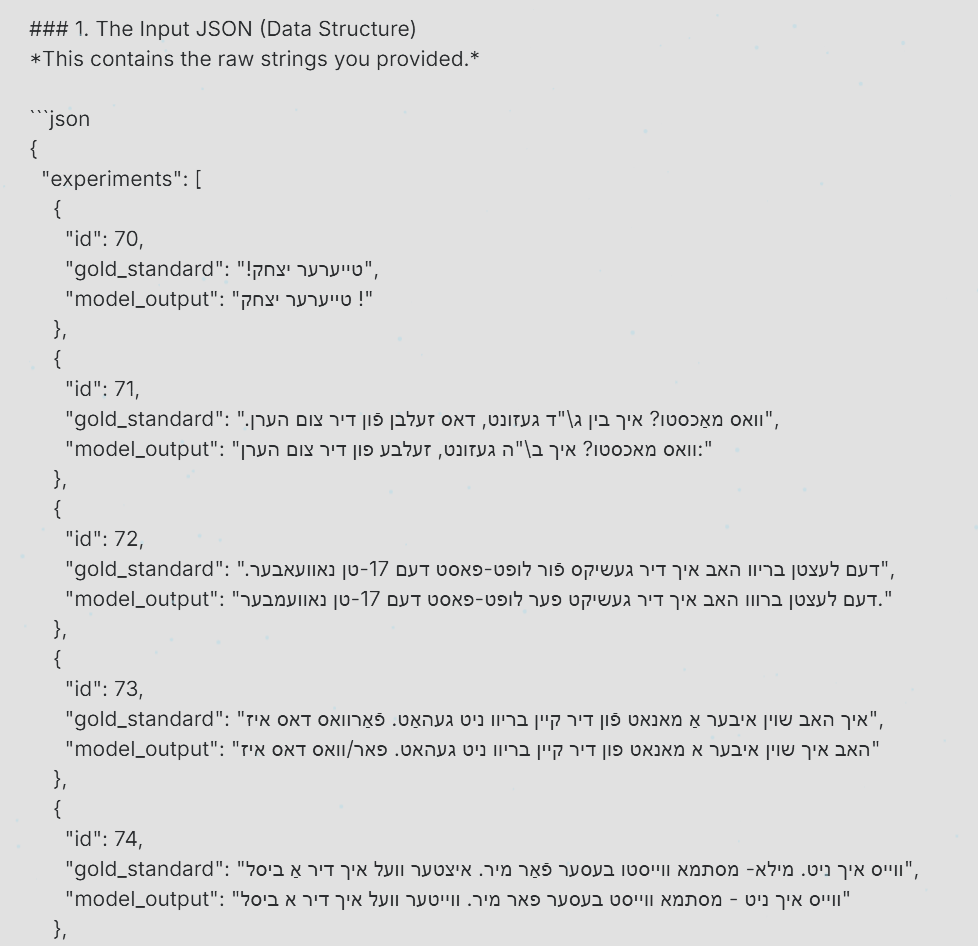

"transcription_list": [

{

"id": 1,

"word": "וואס",

"reading": "vos",

"confidence": "high"

},

{

"id": 2,

"word": "מאכסטו",

"reading": "makhstu",

"confidence": "high"

},

{

"id": 3,

"word": "?",

"reading": "?",

"confidence": "high"

},

{

"id": 4,

"word": "איך",

"reading": "ikh",

"confidence": "high"

},

{

"id": 5,

"word": "ב\"ה",

"reading": "b\"h",

"confidence": "high"

},

{

"id": 6,

"word": "געזונט",

"reading": "gezunt",

"confidence": "high"

},

{

"id": 7,

"word": ",",

"reading": ",",

"confidence": "medium"

},

{

"id": 8,

"word": "זעלבע",

"reading": "zelbe",

"confidence": "high"

},

{

"id": 9,

"word": "פון",

"reading": "fun",

"confidence": "high"

},

{

"id": 10,

"word": "דיר",

"reading": "dir",

"confidence": "high"

},

{

"id": 11,

"word": "צום",

"reading": "tsum",

"confidence": "high"

},

{

"id": 12,

"word": "הערן",

"reading": "hern",

"confidence": "high"

},

{

"id": 13,

"word": ":",

"reading": ":",

"confidence": "high"

}

],

"full_line_text": "וואס מאכסטו? איך ב\"ה געזונט, זעלבע פון דיר צום הערן:"

}

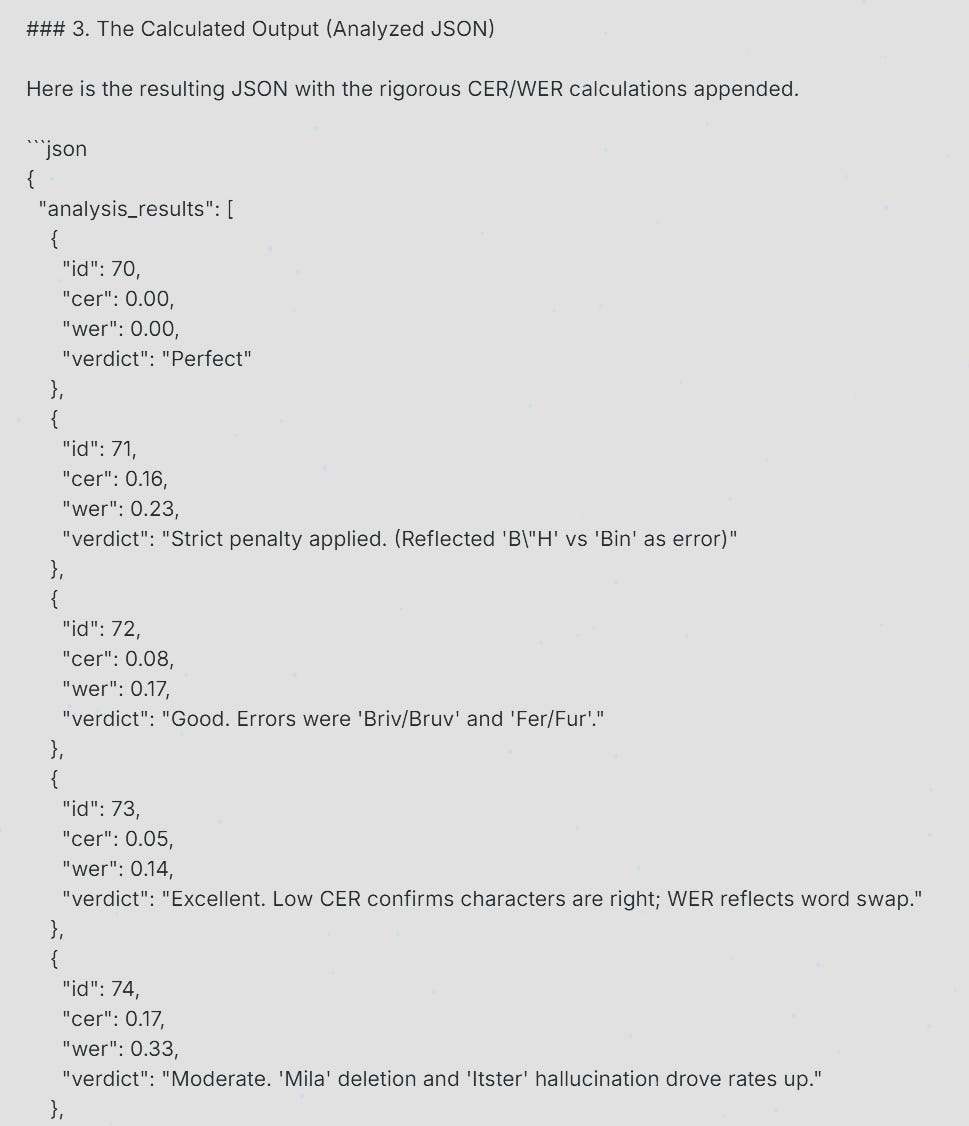

My results

Does the model output make sense? Eyeballing the Yiddish Hebrew character transcriptions from my five experiments they look fairly good in terms of word boundary recognition and character recognition. I then had Gemini do an industry standard python based statistical analysis of character error rates (cer) and word error rates (wer).

The calculations showed line by line cer and wer varying between 0.00 and 0.17 for cer and 0.00 and 0.33 for wer. How do those statistics stack up compared with specialist Yiddish models developed in Transkribus and in other platforms?

But do they make sense and are they readable. Since I don’t read Hebrew characters, I had the same model transliterate and translate the five lines and compare them with Gavin Beinart-Smollan’s gold standard. I will let you judge for yourself, but I find the results to be good.

Big caveat

So a success? All sorted. Game over? Not at all.

When I ran the same prompt on the next four lines of the same letter (Experiments 75 to 78) there were major problems, with much worse cers and wers, and the content diverging way off base. I discovered various causes for this breakdown. The principle cause being unusual very domain specific vocabulary, prompting Gemini to override its ink first rule, and to switch into semantic mode and to make some wrong semantic decisions. When LLM-transcription breaks down it is usually spectacular, not a linear degradation.

The failures I observed in Experiments 75 through 78 showed a shift from visual recognition errors (misreading a character) to semantic hallucinations (inventing a reality). The primary cause was blindness to the specific domain: the model lacked the specific contextual knowledge that the letter described a vocational workshop.

In line 7 (Experiment 76) the model encountered the visual sequence `Shin-Lamed...` (start of *Schloserei*/Locksmith) followed by `Ayin-Lamed...`. Its training data, presumably heavily weighted towards Jewish literature, overpowered the visual evidence, forcing it to autocomplete the greeting "Sholom Aleichem” instead of the technical term.

In line 8 (Experiment 77) the letter writer used the Russian-derived technical term "Tokarnes" (Lathes). The model, unfamiliar with this specific trade jargon in Yiddish, rejected the visual evidence (`ט-א-ק`) and hallucinated the visually similar, common verb "Bakumen" (Receive). The aggressively low Top P 0.15 excluded any consideration of a highly unlikely option.

In line 9 (Experiment 78) the model, not knowing the letter was about machinery, the saw `Prez-mashin` (Milling Machine) and guessed "Dresh-mashin" (Threshing Machine)—a visually similar word that fits a "Yiddish Shtetl" context better than a "Modern Factory" context.

An immediate remedy which worked for my very specific use case was a small amount of context injection into the prompt, though that is not always an option, especially for a user who wants to transcribe a letter and cannot personally read it, and has no idea of the context. By explicitly prompting the model with the domain ("This text describes a Locksmith Workshop using technical Russian loanwords"), I managed to suppress the "Shtetl" bias and prime it to expect "Lathes" instead of "Greetings."

The key design tension in writing a prompt for Yiddish transcription is to decide in what circumstances to allow the model to engage semantically, not just visually. And then to get the model to obey the prompt designer.

What remains to be done?

I make no claims that the prompt I have developed is currently generalisable from the specific letter I have been working on. But, I do believe that the techniques I have used are relevant and worth exploring. Not just for Yiddish, but for other medium and low resource languages, including other Right to Left languages. If nothing else, I hope this article will encourage others to conduct their own transcription experiments in Yiddish using Gemini, and to consider applying similar (or indeed totally different thinking) to other historically imporant languages such as Ottoman Turkish and scholastic Bengali.

There is plenty of work left to done developing and testing prompting strategies on different hands and from different periods, including significantly messier and more challenging hands.

Moreover, there is plenty of work to be done to ensure consistent line level outcomes across a whole page, rather than having certain lines fail spectacularly. Furthermore, my method was deliberately to statelessly transcribe one line then another line, in different context windows, rather than to batch process in one context window. As a Proof of Concept this is fine, but very token intensive. To do Yiddish transcription in a more token efficient and practical way, we need to be able to process page by page, not line by line.

I have conducted experiments with some success using other methods such as a rolling three line window (designed to help deal with interference from the lines above and below the line of focus). I have also conducted experiments using single shot priming, in which I provided five to ten lines of Yiddish handwriting and their gold standard quivalent, to prime Gemini’s context window before executing a user prompt. I have also tried putting the bulk of the user prompt into systems instructions and calling them from a short user prompt. All show promise. All need development.

I have chosen not to describe them in detail nor present the results here to keep the focus on my main message, which is that Gemini 3 Pro has innate Yiddish transcription capabilities and these can be operationalised.

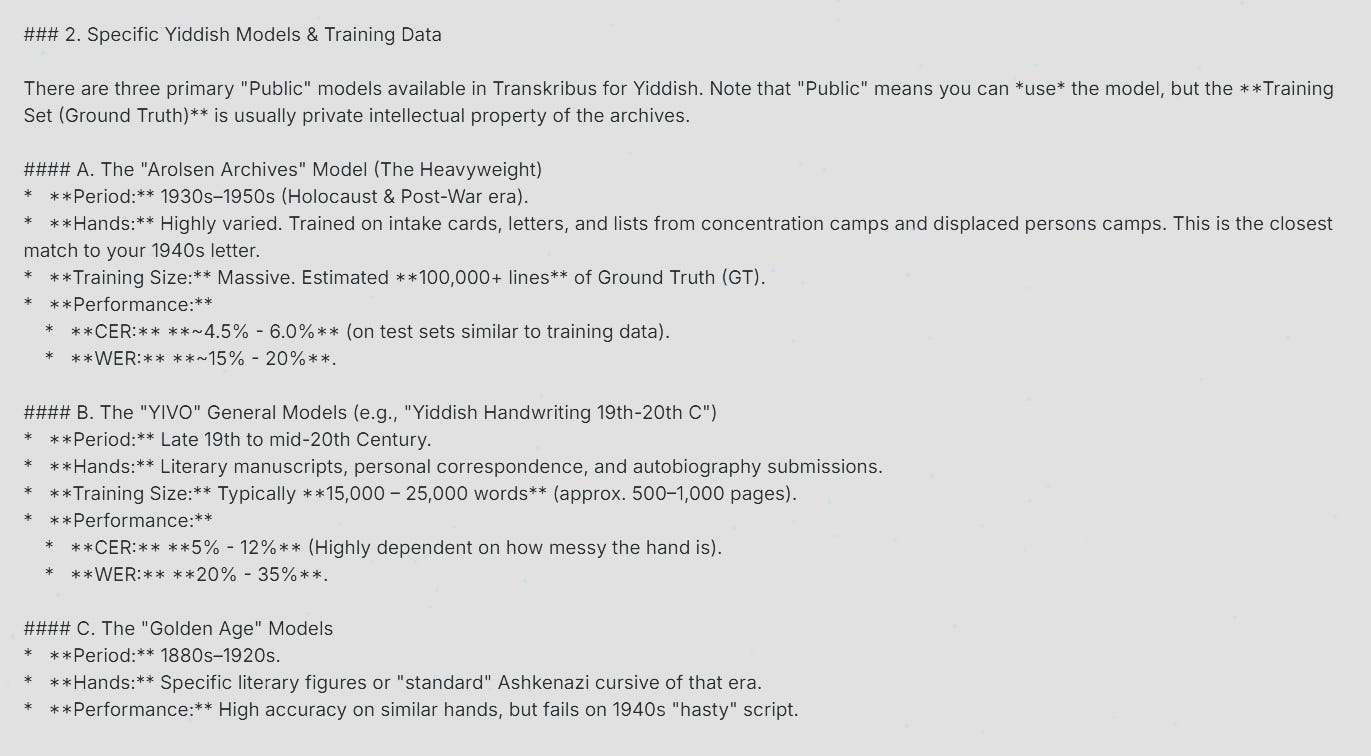

Transkribus Yiddish models

How do our obviously VERY limited results for five lines compare with results reported from Transkribus Yiddish models? The first five lines compare very well. The next four lines, well no. But, the crucial point I want to make is that I am doing zero-shot transcription using Gemini. There are NO training data. The Transkribus models are trained on anything between 15,000 words and 100,000 lines of text.

Agentic and Skills Framework

Finally, I want to share some Blue Sky thinking, in the hope of getting a reaction and to encourage experimentation, including some experimentation by Google and Anthropic, whose LLMs currently show most HTR promise.

For complex edge cases, including Right to Left (RTL) languages, and those with demanding features such as diacritics and ligatures, I propose an agentic and skills framework, with the goal of improving results for a specific writer’s hand. This may also help generalise the HTR capabilities of a specific LLM model for a specific language.

I am currently experimenting with different structures for my proposed skills.md files and to see whether they can significantly and usefully affect model behaviour. Either as injections into systems instructions, or into user prompts. Or called by an agent, which might be a much cheaper and faster Gemini 3 Flash, which could triage lines or pages and select specific skill.md files tailored to the difficulty of a hand or condition of a document.

Skills.md files

Users of Anthropic’s Claude will already be familiar with the concepts of “Skills”, and will have seen small skill.md files execute in front of them as they follow the thought trace of recent Claude models such as Claude Opus 4.5. The specific skill.md file depends on the task a user requires Claude to perform. For example, I am working on a technical document summarizing my Yiddish HTR experiments, and Claude invokes a docx.md file before each revision of the document, and then drills into a docx-js.md file, which is specifically for creating new documents.

HTR-skills.md files

I am proposing that palaeographers themselves, and companies like Google and Anthropic, create skill.md files targeting HTR and specifically targeting tasks such as transcription, transliteration and translation. These files could be very specialised.

For example, in the case of transcription:

Above the specific language level, they could define precisely what the palaeographer means by “literal” or “diplomatic” trancription, semi-diplomatic, “flowing” or “modernised”.

At the specific language level, they could define the character of a specific generic hand such as “Secretary Hand” or “Kurrent”.

At the writer level, such as a specific notary (whether or not that notary’s name is known). they could define the specifics of that writer’s hand.

Yidish HTR-skills.md file example

Let me explain what I mean in practice, by showing you some work I have done on Yiddish writers skills files. Working with an instance of Gemini 3 Pro, which had been primed to assist me in the skills building task as a forensic Yiddish palaeographer, I prompted:

”I am going to give you model output for five lines of a 1940s Yiddish letter. I generated this via the Google AI Studio, with the following settings: Temperature = 0.2, thinking = low, media resolution = default; Top P = 0.15, and achieved very accurate transcriptions with good cer and wer statistics.

Please think carefully how to create a detailed example of a HTR-skills.md file for this writer’s hand. The object of this skill file is to capture the unique features of this writer’s hand, concentrating on difficult characters and features, which you believe future instances of Gemini 3 Pro will find difficult and where the model would benefit from guidance. Structure the skill file carefully and thematically and then write a section explaining why you have chosen this structure ad how you have extracted the insights from the model output I have given you to create the sysnthesised skill file. Call the hand “Yiddish 1940s Hand A”

Gemini provided the following draft of an HTR skill. It did this by analysing very detailed visual and linguistic observations made by another instance of Gemini, which had worked on all five lines of a 1940s Yiddish letter, drawing on acute visual observation and deep trained knowledge of both Hebrew and Yiddish.

Draft HTR Skill: Yiddish 1940s Hand A

Description: Specialized visual logic for 1940s-era Ashkenazi cursive Yiddish, specifically focused on a hand that utilizes traditional diacritics (rafe, pasekh) and exhibits rapid-script letter mergers.

1. Graphetic Fingerprint (Visual Anchors)

The Yud-Vav Cluster Problem: * Visual: In words like

בריוו(briv), the vertical strokes forי(yud) andוו(vav-vav) are nearly identical in height and spacing.Correction: Do not default to

ו(vav) for all vertical strokes. If the word context suggests “letter,” the cluster isי-ו-ו, notו-ו-ו.

The Samekh/Tet Confusion:

Visual: The writer forms a

ס(samekh) with a sharp exit stroke that resembles aט(tet).Identification: In verb endings like

געשיקס(geshiks - a dialect/idiosyncratic variant), the final stroke is aס(samekh), even if the model expects a standardט(tet) forגעשיקט.

Slash-like Morphology: * Visual: The writer’s

א(alef) orפ(pey) exit strokes can be long and diagonal.Constraint: Avoid transcribing forward slashes

/. These are likely elongated connections or parts of the letterפֿאַ(pey-rafe-alef-pasekh).

2. Diacritic Awareness (The “Invisible” Ink)

The Rafe (Over-bar): * Feature: The writer consistently uses a horizontal line over the

פto indicateפֿ(f).Instruction: Search for 2-5 pixel horizontal strokes above the

פ. Transcription must include the rafe.

The Pasekh (Under-bar): * Feature: A horizontal line under the

א(alef) to indicateאַ(a).Instruction: Do not ignore horizontal sub-strokes. They are semantic markers, not paper noise.

Gershayim (Abbreviation Quotes):

Visual: Look for

"between letters.Context: Distinguish between

ג"ד(Gott danken) andב"ה. If the first letter is clearly aג(gimel), do not hallucinateב"ה.

3. Linguistic Biases & Normalization Gates

Anti-Auto-Correct: This writer uses non-standard or phonetic spellings (e.g.,

נאוועאבערinstead ofנאוועמבער;געשיקסinstead ofגעשיקט).Rule: Transcribe the ink, not the “correct” dictionary Yiddish.

Suffix Preservation: The writer uses the

ו-suffix for verbs (ווייסטו).Visual Warning: The terminal

ו(vav) may be small. Check for a final vertical stroke after theט(tet).

Omitted Lexemes: In rapid script, short words like

מילא(meyle) orדאס(dos) may be written with very thin ink.Spatial Check: If there is a gap between two recognized words, re-scan the “empty” space for high-speed cursive loops.

4. Negative Constraints (The “Do Not” Rules)

To maintain forensic integrity, the model must explicitly avoid the following common “correction” behaviors:

DO NOT Normalize Phonetics: * If the ink shows

געשיקס, do not transcribeגעשיקט.If the ink shows

נאוועאבער, do not transcribeנאוועמבער.Reasoning: The writer’s specific dialect or haste-based spelling is primary; the dictionary is secondary.

DO NOT Suppress “Noise” That Aligns Horizontally: * Do not ignore small horizontal dashes above or below letters (Rafe/Pasekh).

Do not treat these as paper grain or scanner artifacts.

DO NOT Hallucinate Common Abbreviations: * If the first letter of a two-letter abbreviation is

ג(gimel), do not outputב"ה.The model must prioritize visual letter identification over the statistical probability of a religious greeting.

DO NOT Transcribe Modern Punctuation Slants: * Do not use the forward slash

/unless it is clearly isolated from all other letter strokes.Assume diagonal strokes are part of a

פ(pey) orא(alef) unless proven otherwise by significant whitespace.

DO NOT Insert Word Spaces in Ligatures: * In suffixes like

-סטו(e.g.,מאכסטו,ווייסטו), do not insert a space between theטand theו.Even if the connection is a single thin pixel line, treat it as a single unit.

Extraction Methodology and Structural Justification

Gemini delivered on my request for an explanation to its approach in constructing the HTR Skills file:

1. Extraction of Insights

To create this file, I performed a “Delta Analysis” between your Gold Standard and the Model Output.

Normalization Bias: In Line 3, the model saw

געשיקסand “corrected” it toגעשיקט. In Line 5, it turnedאיצטער(now) intoווייטער(further). This indicates the model is using its internal Language Model (LM) to override its Vision Head. Theskills.mdincludes a specific “Anti-Auto-Correct” rule to force the Vision Head back into priority.Diacritic Erasure: The model failed to capture any of the rafe or pasekh marks (e.g.,

פֿוןvsפון). Since these are vital for 1940s orthography, I created a dedicated “Diacritic Awareness” section.Hallucination of Commonality: In Line 2, the model saw the abbreviation

ג"ד(Gott danken/God be thanked) and replaced it with the much more commonב"ה(Baruch Hashem). This is a classic “Thinking: Low” error where the model picks the most probable token in its training set rather than the actual glyph.

2. Structural Justification

I chose a Thematic Structure rather than a character-by-character list for the following reasons:

Graphetic Fingerprint: By defining the “Yud-Vav Cluster Problem,” I am targeting the most common failure point in cursive HTR (minim confusion). Providing a rule for the relationship between these letters is more effective than just defining them individually.

The “Invisible” Ink: This section targets the “Low” thinking setting. At low thinking, the model tends to filter out “noise.” By labeling diacritics as “Semantic Markers,” we prevent the model from discarding those pixels.

Linguistic Biases: This section serves as a “Negative Constraint.” It tells the model what not to do (i.e., don’t be a spell-checker), which is essential for preserving the forensic integrity of historical documents.

3. Visual-Semantic Bridging

I included “Visual Anchors” (like the “slash-like morphology”) because Gemini 3 Pro is highly sensitive to spatial descriptions. Telling it why it might be seeing a slash (as a pey-exit stroke) allows it to re-categorize those pixels under a different character probability.

Humphries, Mark, ‘Gemini 3 Solves Handwriting Recognition and it’s a Bitter Lesson: Testing shows that Gemini 3 has effectively solved handwriting on English texts, one of the oldest problems in AI, achieving expert human levels of performance.’, Generative History, November 24th 2025, accessed 04/12/2025. Click here.

Greenstreet, Colin, ‘A New Lens into the Archive: You are in an archive. You find a document in a language you don’t understand. You take a photo, input it into Gemini 3 Pro. 60 seconds later you have a transcription, transliteration, and translation’, Generative Lives, December 4th 2025. Click here

Hey, great read as always. This realy builds on your MarineLives post. So cool to see how LLM models make historical HTR empowering for historians.